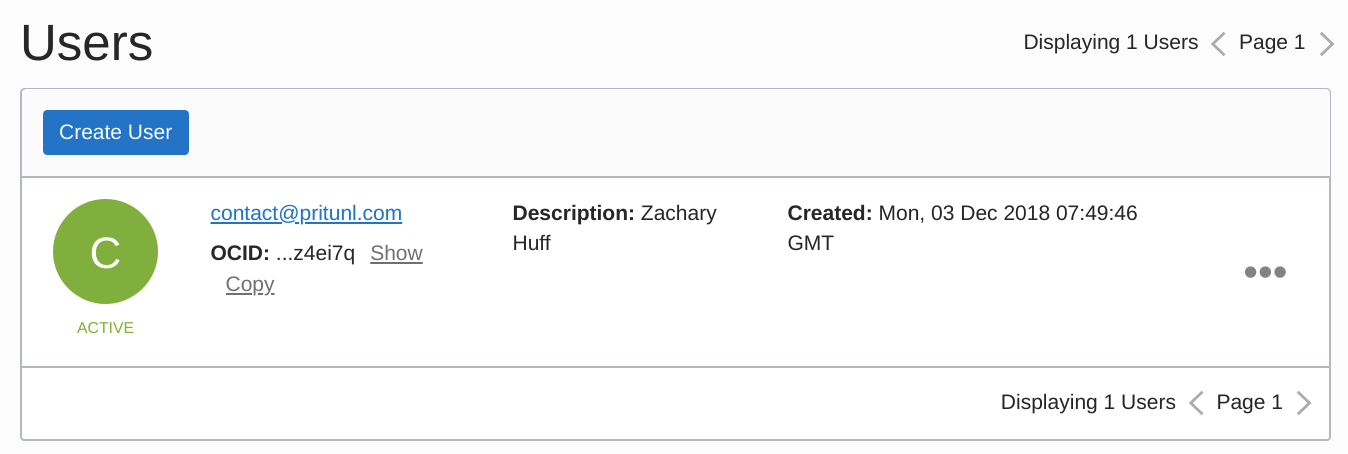

A StorageClass is a type of k8s resource that manages persistent disk settings. GKE already provides a `standard` StorageClass, so I'll use it. If you want to use an SSD or a custom replication setting, you can create a custom StorageClass.

Then, to let the Pritunl containers access the MongoDB cluster, we need to create a Headless Service. This is a type of Service resource, but it does not do load balancing. We need it because MongoDB clients have to identify which server is the master node and which are replica nodes. With a Headless Service, each container connected to the service gets a static DNS name.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: mongo-svc
spec:
  ports:
    - port: 27017
      targetPort: 27017
  clusterIP: None
  selector:
    app: mongo
```

Most of it is the same as a normal Service, but it sets `clusterIP: None` and does not select a `type`. With this, each MongoDB pod gets its own DNS name. If the pod's name is `mongo-statefulset-0`, the DNS name will be `mongo-statefulset-0.mongo-svc`.

OK, the only thing left is to define the MongoDB pods. As I already mentioned, each MongoDB pod should have its own DNS name, and the Headless Service builds that name from the pod name. This means we need static pod names. A StatefulSet offers ordered, graceful deployment and scaling, and it gives each pod a static name and its own storage.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: mongo-statefulset
spec:
  replicas: 3
  selector:
    matchLabels:
      app: mongo
  serviceName: "mongo-svc" # 1
  template:
    metadata:
      labels:
        app: mongo
    spec:
      terminationGracePeriodSeconds: 10
      containers:
        - name: mongo
          image: mongo:4.2
          resources:
            requests:
              memory: "200Mi"
          command:
            - mongod
            - "--replSet"
            - rs0
            - "--bind_ip"
            - "0.0.0.0"
          ports:
            - containerPort: 27017
          volumeMounts:
            - name: mongo-persistent-storage # 2
              mountPath: /data/db
  volumeClaimTemplates:
    - metadata:
        name: mongo-persistent-storage # 3
      spec:
        storageClassName: "standard" # 4
        accessModes:
          - ReadWriteOnce # 5
        resources:
          requests:
            storage: 30Gi
```

I don't want to explain every line, so let's pick out a few of them.

1 specifies `serviceName`. According to the API document, this is required to set the pods' DNS names when they are created, so it has to match the name of the Headless Service.

2 specifies which persistent volume the pods will use, and its definition is at 3. 4 chooses the StorageClass that GKE prepared for us. 5 is the access mode, which controls how the disk can be mounted for reading and writing; normally `ReadWriteOnce` will be fine.

One more thing. The document I referred to mentions ordered rolling updates, so how are they managed? By default, the StatefulSet update strategy is `RollingUpdate`, so pods are updated one by one and we can keep the DB cluster available.

Now we have 3 pods with static DNS names and storage. So let's log in to one of them and wake them up.

```
$ kubectl exec -it mongo-statefulset-0 -- /bin/bash
mongo
> rs.initiate( )
```

After running this command, they cooperate as a cluster. We don't need to set a password because clients outside the k8s cluster cannot access them.

For Pritunl, I couldn't find an official image, so I built one from `ubuntu:18.04`.

`Dockerfile`:

```dockerfile
FROM ubuntu:18.04
RUN apt update
COPY --chown=root:root
RUN bash /root/install.sh
COPY start.sh /root/
EXPOSE 80
EXPOSE 443
EXPOSE 1999
CMD
```

`install.sh`:

```bash
set -ex
apt install -y gnupg2
echo 'deb bionic main' > /etc/apt//pritunl.list
apt-key adv --keyserver hkp:// --recv 7568D9BB55FF9E5287D586017AE645C0CF8E292A
apt update
apt install -y pritunl iptables
```

`start.sh`:

```bash
set -ex
# Point Pritunl at all three replica set members via their static DNS names.
pritunl set-mongodb "mongodb://mongo-statefulset-0.mongo-svc,mongo-statefulset-1.mongo-svc,mongo-statefulset-2.mongo-svc/pritunl?replicaSet=rs0"
pritunl set app.redirect_server true
pritunl set app.server_ssl true
pritunl set app.server_port 443
pritunl set app.reverse_proxy true
pritunl start
```

They are simple, so I won't explain them.
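The MongoDB URI in `start.sh` is just the pod DNS names joined with commas, so it can be derived from the StatefulSet name, the Headless Service name, and the replica count. Here is a minimal sketch of that idea. The function name `build_mongo_uri` and its argument order are hypothetical, made up for illustration; they are not part of Pritunl or Kubernetes.

```shell
#!/bin/sh
# Hypothetical helper: build a replica-set URI from StatefulSet naming rules.
# StatefulSet pods are named <statefulset>-<ordinal>, and the Headless Service
# gives each one the DNS name <pod>.<service>.
build_mongo_uri() {
  # $1 StatefulSet name, $2 Headless Service name, $3 replica count,
  # $4 database name, $5 replica set name
  hosts=""
  i=0
  while [ "$i" -lt "$3" ]; do
    # Append a comma only when a host is already in the list.
    hosts="${hosts}${hosts:+,}${1}-${i}.${2}"
    i=$((i + 1))
  done
  printf 'mongodb://%s/%s?replicaSet=%s\n' "$hosts" "$4" "$5"
}

# Values taken from the manifests in this post.
build_mongo_uri mongo-statefulset mongo-svc 3 pritunl rs0
```

With the values from this post, it prints the same URI that `start.sh` passes to `pritunl set-mongodb`, so scaling the StatefulSet only means changing the replica count argument.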

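The arguments passed to `rs.initiate` are not shown in the session above. A common pattern is to list each member by its stable DNS name, so here is a rough sketch that generates such a call as text. It assumes the default MongoDB port 27017 and member IDs equal to the pod ordinals. `build_rs_config` is a hypothetical helper, and the configuration actually used for this cluster may have been different.

```shell
#!/bin/sh
# Hypothetical helper: print an rs.initiate(...) call that lists every
# StatefulSet pod as a replica set member, addressed by its static DNS name.
build_rs_config() {
  # $1 replica set name, $2 StatefulSet name, $3 Headless Service name,
  # $4 replica count
  members=""
  i=0
  while [ "$i" -lt "$4" ]; do
    # Assumption: member _id equals the pod ordinal, port is the default 27017.
    members="${members}${members:+, }{ _id: ${i}, host: \"${2}-${i}.${3}:27017\" }"
    i=$((i + 1))
  done
  printf 'rs.initiate({ _id: "%s", members: [ %s ] })\n' "$1" "$members"
}

# Values taken from the manifests in this post.
build_rs_config rs0 mongo-statefulset mongo-svc 3
```

The printed text could be pasted into the `mongo` shell, or probably passed in non-interactively with something like `kubectl exec mongo-statefulset-0 -- mongo --eval "$(build_rs_config rs0 mongo-statefulset mongo-svc 3)"`.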